The problem

Code review blind spots

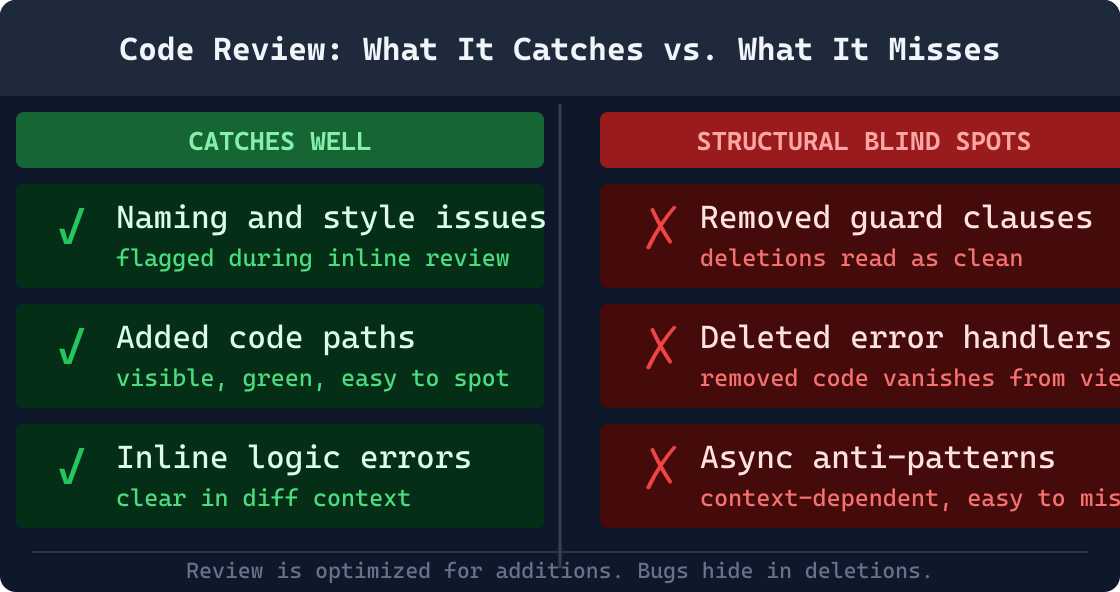

Code review is not a reliable safety net for behavioral risk. It is excellent at catching obvious errors and enforcing style. It is structurally limited at catching the changes that cause production incidents.

What the research says

The limitations of code review as a defect-finding mechanism are not a matter of opinion; they are documented in peer-reviewed research. Czerwonka et al. at Microsoft Research conducted a large-scale study of code review outcomes and concluded that code reviews are not an effective strategy for finding bugs.[1] Most defects found in review are style, naming, and readability issues. Functional defects, the kind that cause production failures, escape review at a high rate relative to their cost and their impact on users.

Bacchelli and Bird's foundational 2013 study of modern code review documented a significant gap between what reviewers intend to accomplish and what they actually accomplish. Reviewers report that finding bugs is a primary goal of the review process. When outcomes are measured, the dominant finding category is style and understanding-related comments, not defects. The expectation that review will catch behavioral regressions is not consistently supported by the data from teams that have measured it.[2]

Czerwonka et al. (ICSE 2015): "Code reviews at Microsoft are mostly used to improve code and find alternative solutions, not to find bugs."[1] The study documented that only about 15% of review comments indicate a possible defect. Subsequent replications report similar patterns at different organizations, though rates vary by team size, domain, and whether structured checklists are used. Counter-evidence exists: teams that enforce mandatory security checklists and strict PR size limits report meaningfully higher defect-detection rates in review, per Bacchelli and Bird (2013).[2]

McIntosh et al. further documented that review coverage, the percentage of changes that receive any review at all, correlates with long-term software quality outcomes.[3] But coverage and rigor are not the same thing. A PR can receive a review that is technically thorough on the lines present while completely missing a removed guard clause or an implicit contract change. The structural blind spots of review exist independent of reviewer effort or reviewer skill.

Review was designed for a different problem

Code review was designed for human oversight of intent and readability. It answers the question "does this code do what the author intended?" effectively. It answers the question "does this change break an assumption made somewhere else in the system?" poorly, because the second question requires exhaustive structural analysis of the diff, not human pattern recognition under time pressure with incomplete knowledge of the full system.

Code review asks a human to read code under time pressure with incomplete runtime context. That human brings domain knowledge, intent verification, and design judgment that no tool can replicate. The same human cannot reliably detect that a removed line was the only guard between a valid state and a NullReferenceException surfacing three months later. Detecting that requires exhaustive analysis of what was removed, not comprehension of what remains.

Confirmation bias compounds this structural limit. A reviewer scanning new code for correctness is primed to verify that the additions achieve their purpose. That mental mode makes it less likely, not more, that the same reviewer will notice what disappeared. "Does this code do what it should?" and "what used to happen on this code path?" are different cognitive tasks. Review concentrates attention on the first. Detecting removed behavior requires a deliberate audit of deletions that most reviewers do not perform systematically, because finding bugs was never what review was designed to do.

Behavioral drift, contract changes, and removed safety checks are structural properties of a diff. Detecting them requires rule-based analysis of what was removed, not the holistic comprehension that humans do well. This is the gap automated pre-commit analysis closes.
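As a rough illustration of what rule-based analysis of removed lines can look like, the sketch below scans a unified diff for deleted lines that resemble guard clauses. The `RemovedGuardScanner` class, its regular expression, and the heuristic itself are assumptions made for this example; this is not a description of how GauntletCI or any other tool implements the check.

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Hypothetical sketch: flag deleted lines in a unified diff that look like guards.
public static class RemovedGuardScanner
{
    // Assumed heuristic: a deleted line that throws, or an `if` that compares
    // against null or against zero. Real rules would need to be far richer.
    private static readonly Regex GuardPattern = new Regex(
        @"\bthrow\b|\bif\s*\(.*(==\s*null|is\s+null|<=?\s*0)",
        RegexOptions.Compiled);

    public static List<string> FindRemovedGuards(string unifiedDiff)
    {
        var findings = new List<string>();
        foreach (var rawLine in unifiedDiff.Split('\n'))
        {
            var line = rawLine.TrimEnd('\r');

            // Deleted lines start with '-'; "---" introduces the old-file header.
            // (A deleted line whose content begins with "--" is skipped too,
            // which is a known gap in this simple check.)
            bool isDeletion = line.StartsWith("-", StringComparison.Ordinal)
                              && !line.StartsWith("---", StringComparison.Ordinal);
            if (!isDeletion)
                continue;

            var content = line.Substring(1).Trim();
            if (GuardPattern.IsMatch(content))
                findings.Add(content);
        }
        return findings;
    }
}
```

A check like this never reads the added lines at all, which is the point: it applies the same audit of deletions to every diff. It is also deliberately narrow. A removed `if (ct.IsCancellationRequested)` check, for example, would not match this pattern, so a practical rule set would need many such patterns.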

8 categories review consistently misses

These are not exotic edge cases. They are the most common root causes of production regressions in codebases that have active, well-intentioned code review processes. The existence of review does not protect against them in any reliable way.

Reviewers see what is there, not what was removed

Diffs show additions in green and deletions in red, but human attention naturally gravitates toward the new code being introduced. A removed null guard, a deleted validation step, or a missing error handler is easy to miss in a large diff because the new code path looks complete; it just no longer handles the cases the deleted lines covered. Research by Bacchelli and Bird found that reviewers focus primarily on the correctness of additions and rarely audit deletions with the same rigor.[2] This asymmetry means that behavioral regressions caused by removed defensive code are among the most common review misses in practice, and they appear in postmortems with a note that reads 'passed code review.'

Example

A null check before a repository write is removed during a refactor. The diff shows 40 lines of clean new code. The deletion is on line 8 of a 50-line hunk. Three reviewers approve. The first null input in production throws a NullReferenceException in a code path that was safe for three years.

Context switching limits depth

A reviewer working through a 400-line diff across 12 files cannot hold the full behavior of every changed function in working memory. Review depth degrades sharply with diff size; studies from Microsoft Research have documented this effect directly, showing that reviewer effectiveness declines as PR size increases beyond roughly 200 lines. The riskiest changes are often buried in the middle of a large PR where attention is thinnest and reviewer fatigue is highest. Splitting large PRs helps, but many teams merge large PRs because the cost of splitting them feels higher than the perceived risk of missing something in review.

Example

A 600-line PR touches an auth middleware, a data access layer, and three API controllers. The critical change, a removed authorization check in one controller, is on file 9 of 12. It receives 4 seconds of review time and three approvals.

Implicit contracts are invisible in the diff

When a method changes its parameter type or a public interface removes a member, the reviewer verifies that all call sites within the PR were updated. But external consumers (serialized payloads in a database, client SDKs, stored procedures, or microservices calling the changed API) are outside the diff entirely. These implicit contracts exist in the runtime, not in the code under review, and no amount of careful reading will surface them. McIntosh et al. found that API contract violations are disproportionately represented in post-merge defect reports relative to their frequency in code, suggesting that reviewers systematically underweight this category of risk.[3] The only way to catch implicit contract breaks is to analyze what the change removed from the public surface, which requires structural analysis of the diff rather than comprehension of the new code.

Example

A DTO property is renamed from 'userId' to 'user_id' for naming consistency. The PR updates all server-side references. A mobile client serialized against the old field name silently reads null for three weeks before anyone reports an issue.
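The silent failure in this example can be reproduced in a few lines. The sketch below assumes the client deserializes with System.Text.Json, which by default ignores unknown properties and leaves unmatched properties at their default value; `ClientUserDto` and `ClientSide` are hypothetical names standing in for the mobile client's model.

```csharp
#nullable enable
using System.Text.Json;
using System.Text.Json.Serialization;

// Hypothetical client-side model, still bound to the old wire name.
public sealed class ClientUserDto
{
    [JsonPropertyName("userId")]
    public string? UserId { get; set; }
}

public static class ClientSide
{
    // Returns whatever the client ends up seeing for the user id.
    public static string? ReadUserId(string json) =>
        JsonSerializer.Deserialize<ClientUserDto>(json)?.UserId;
}
```

With the old payload, `ClientSide.ReadUserId("{\"userId\":\"42\"}")` returns `"42"`. With the renamed payload, `ClientSide.ReadUserId("{\"user_id\":\"42\"}")` returns `null` without throwing, so nothing on the server side and nothing in the PR signals the break.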

Async and concurrency risk is structurally invisible

An async void method, a .Result call on a Task, or a static mutable field accessed without synchronization looks like syntactically normal code to a reviewer who is not specifically scanning for concurrency anti-patterns. These issues require both specialized knowledge and deliberate, focused attention; they cannot be caught by a reader scanning for logical correctness in the usual sense. They rarely appear in checklist-driven reviews because most review checklists target obvious correctness, not threading semantics. In .NET specifically, the deadlock-by-.Result-on-async-method pattern is well documented as a category of bug that code review almost never catches before production exposure, because the code looks identical to correct synchronous code until it is running under real load.

Example

A service method is converted to async, but one caller stays synchronous and blocks on the returned Task with .Wait(). In tests the call completes immediately. Under real load the ThreadPool starves and the service deadlocks intermittently with no clear error message.
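A minimal sketch of the two caller shapes follows. `ReportService`, `CountRowsAsync`, and the `Callers` class are hypothetical, and `Task.Delay` stands in for real I/O. Both callers return the same value in a quiet test run, which is why the blocking one tends to pass both tests and review.

```csharp
using System.Threading;
using System.Threading.Tasks;

// Hypothetical service after its conversion to async.
public sealed class ReportService
{
    public async Task<int> CountRowsAsync(CancellationToken ct = default)
    {
        await Task.Delay(10, ct); // placeholder for a database or HTTP call
        return 42;
    }
}

public static class Callers
{
    // Risky: the calling thread is parked until the task finishes. Under load,
    // every parked thread is one fewer thread available to run continuations.
    public static int CountBlocking(ReportService service)
    {
        var task = service.CountRowsAsync();
        task.Wait();
        return task.Result;
    }

    // Safer: the caller is async as well, so no thread is held while waiting.
    public static async Task<int> CountAsync(ReportService service, CancellationToken ct = default)
    {
        return await service.CountRowsAsync(ct);
    }
}
```

The only textual difference a reviewer sees is `.Wait()` versus `await`, which is easy to read past when scanning for logical correctness.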

Social pressure compresses review time

PRs that have been waiting in the queue get approved faster as reviewers feel social pressure to unblock colleagues. PRs from senior engineers or tech leads receive less critical review than PRs from junior developers, a pattern observed in qualitative studies of peer review dynamics, including Bacchelli and Bird's 2013 fieldwork at Microsoft.[2] PRs marked urgent, blocking a release, or associated with a deadline are often approved without detailed review, which is precisely when the risk of a shallow review is highest. This dynamic is not a failure of individual discipline; it is a structural property of any human review process under time pressure. The social signal of "approved" is not a reliable proxy for the technical signal of "safe to merge." The two diverge most sharply when rigorous review matters most.

Example

A critical hotfix PR with five changed files gets three approvals in 11 minutes on a Friday afternoon before a release. One of the changed files removes a rate limiting guard that was preventing a known abuse pattern from reaching the database.

Security patterns require specialized, active awareness

Spotting SQL injection risk, identifying a weak cryptographic primitive, recognizing a PII field being written to a log statement, or noticing an authorization gap in a new endpoint requires the reviewer to be actively in a security-focused mental mode for the duration of the review. Most reviewers are not in that mode for every PR, because sustaining that level of vigilance across all review tasks is cognitively expensive. Boehm and Basili's defect cost data indicates that defects found after deployment can cost up to two orders of magnitude more to remediate than defects found early, and security defects are no exception, yet the review process provides no structural mechanism to guarantee that security awareness is applied consistently to every change.[4] Security-specific automated analysis, which applies the same rules every time with no fatigue, is a structural improvement over relying on reviewer attention alone.

Example

A new logging statement is added for debugging: logger.LogInformation("Processing request for user with token: " + request.Token). The token is a credential. It ships to production and is indexed by the logging platform, accessible to everyone with log read access.
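One way to make this kind of leak harder to write is to route anything credential-like through a masking helper before it reaches the logger. The `LogRedaction.Mask` helper below is hypothetical, and keeping the last four characters is an assumption chosen for the example; many teams would log no part of a token at all.

```csharp
#nullable enable
public static class LogRedaction
{
    // Hypothetical helper: hide the secret, keeping at most a short suffix
    // so that log lines can still be correlated during debugging.
    public static string Mask(string? secret)
    {
        if (string.IsNullOrEmpty(secret))
            return "<none>";

        // Short secrets are hidden entirely; a suffix would reveal too much.
        if (secret.Length <= 8)
            return "****";

        return "****" + secret.Substring(secret.Length - 4);
    }
}
```

The logging call from the example would then become something like `logger.LogInformation("Processing request for user with token: {Token}", LogRedaction.Mask(request.Token))`. A helper like this only works if it is used every time, which is why an automated rule that flags credential-named fields in log statements is a useful complement.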

Test coverage gaps are not visible in the diff

A reviewer can see that new code was added, but cannot easily determine whether the existing test suite exercises the new or changed code paths in a meaningful way. Coverage tools exist, but they are rarely consulted during review, and they measure line coverage rather than behavioral coverage of the specific delta introduced by the PR. A reviewer approving a change that adds a new error handling branch has no fast way to verify whether a test exercises that branch specifically, whether existing tests happen to hit it incidentally, or whether the behavior is entirely untested. This blind spot compounds the structural gap described in the related article on why tests pass but bugs still reach production; the two mechanisms that were supposed to catch regressions have overlapping blind spots, not complementary ones.

Example

A new branch is added to handle HTTP 429 responses from a downstream service. It looks correct on review. No test covers it specifically. The downstream service starts returning 429 three months later and the new handler throws because a variable was not initialized in that code path.

Configuration and environment changes escape behavioral review

Changes to connection strings, feature flags, environment variable names, default timeout values, or dependency injection lifetimes look innocuous in a diff and are rarely reviewed with the same attention as logic changes. But these changes can alter the runtime behavior of the entire application in ways that no amount of code correctness analysis will reveal. A renamed environment variable silently falls back to a default value. A changed DI lifetime turns a scoped service into a singleton and causes state to leak across requests. These categories of change are structurally invisible to reviewers because their effect only appears at runtime; the code reads correctly, it compiles cleanly, and all tests pass, but the system behaves differently in ways that only manifest under realistic conditions.

Example

A service registration is changed from AddScoped to AddSingleton to address a perceived performance concern. The service holds per-request state internally. In tests each request is isolated. In production, state from one user's request bleeds into the next user's request.
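The leak can be demonstrated outside a web host by treating each DI scope as one request. This sketch assumes the Microsoft.Extensions.DependencyInjection package is referenced; `RequestContext` and `LifetimeDemo` are hypothetical names for the stateful service and the harness.

```csharp
#nullable enable
using System;
using Microsoft.Extensions.DependencyInjection;

// Hypothetical service that holds per-request state in a field.
public sealed class RequestContext
{
    public string? CurrentUser { get; set; }
}

public static class LifetimeDemo
{
    // Simulates two requests, each in its own scope, and returns what the
    // second request observes before it has set anything itself.
    public static string? SecondRequestSees(Action<IServiceCollection> register)
    {
        var services = new ServiceCollection();
        register(services);
        using var provider = services.BuildServiceProvider();

        using (var first = provider.CreateScope())
        {
            first.ServiceProvider.GetRequiredService<RequestContext>().CurrentUser = "alice";
        }

        using (var second = provider.CreateScope())
        {
            return second.ServiceProvider.GetRequiredService<RequestContext>().CurrentUser;
        }
    }
}
```

Calling `LifetimeDemo.SecondRequestSees(s => s.AddScoped<RequestContext>())` yields `null`, because each scope gets a fresh instance. Calling it with `s => s.AddSingleton<RequestContext>()` yields `"alice"`: the second request sees the first user's state. The diff between the two registrations is a single identifier.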

A concrete .NET example

Consider a common refactor in a .NET service layer. A developer is simplifying an async method and removes what looks like redundant guard code. The diff looks clean and the new code is idiomatic .NET 6+. Every reviewer sees the new code as correct. What they will not notice is what was removed and what contracts that removal breaks.

UserService.cs -- staged diff

```diff
@@ -38,14 +38,9 @@
 public async Task<UserDto> GetUserAsync(int id, CancellationToken ct)
 {
-    if (id <= 0)
-        throw new ArgumentOutOfRangeException(nameof(id), "Id must be positive.");
-    if (ct.IsCancellationRequested)
-        ct.ThrowIfCancellationRequested();
     var entity = await _repository.FindByIdAsync(id, ct);
-    if (entity == null)
-        throw new NotFoundException("User not found.");
+    ArgumentNullException.ThrowIfNull(entity);
     return _mapper.Map<UserDto>(entity);
 }
```

GCI0001: Removed guard clause -- ArgumentOutOfRangeException on id <= 0 is no longer thrown. Negative ids now reach the database layer.

GCI0014: Exception type changed from NotFoundException to ArgumentNullException. Callers catching NotFoundException will not catch this path.

The new code is syntactically correct and idiomatic. ArgumentNullException.ThrowIfNull is the recommended .NET 6+ pattern for null checks. But two behavioral contracts were broken: zero and negative id values now reach the database layer instead of being rejected early, and all callers that catch NotFoundException will see an uncaught ArgumentNullException in the new path. Neither issue is visible from reading the green lines. Both are immediately visible from analyzing what was removed.
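The effect of the changed exception type on an existing caller can be shown in isolation. In this sketch, `NotFoundException` is a stand-in for the application's own type, and `ContractCheck` with its `Describe`, `OldLookup`, and `NewLookup` members is hypothetical; it assumes .NET 6 or later for `ArgumentNullException.ThrowIfNull`.

```csharp
#nullable enable
using System;

// Stand-in for the application's NotFoundException.
public sealed class NotFoundException : Exception
{
    public NotFoundException(string message) : base(message) { }
}

public static class ContractCheck
{
    // A caller written against the original contract: "not found" maps to 404,
    // anything else is treated as an unexpected server error.
    public static string Describe(Func<object?, object> lookup)
    {
        try
        {
            lookup(null); // simulate the repository returning no entity
            return "200";
        }
        catch (NotFoundException)
        {
            return "404";
        }
        catch (Exception ex)
        {
            return "500: " + ex.GetType().Name;
        }
    }

    // Behavior before the refactor.
    public static object OldLookup(object? entity)
    {
        if (entity == null)
            throw new NotFoundException("User not found.");
        return entity;
    }

    // Behavior after the refactor.
    public static object NewLookup(object? entity)
    {
        ArgumentNullException.ThrowIfNull(entity);
        return entity;
    }
}
```

`ContractCheck.Describe(ContractCheck.OldLookup)` returns `"404"`, while `ContractCheck.Describe(ContractCheck.NewLookup)` returns `"500: ArgumentNullException"`. The caller did not change and does not appear in the diff, yet its observable behavior did.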

This is the class of change that appears in production incident postmortems with the note "passed code review." The reviewer was not negligent. The diff structure did not make the risk visible. Automated diff analysis is designed specifically to close this gap by applying the same structural rules every time with no fatigue and no context switching.

How PR size amplifies every blind spot

Every blind spot described above gets worse as PR size increases. The relationship between diff size and review effectiveness is not linear; it degrades sharply beyond a threshold. Microsoft Research shows reviewers maintain effective attention for roughly 200 to 400 lines of diff. Beyond that, the cognitive load exceeds working memory capacity. Reviewers shift from line-level analysis to impression-level judgment.

Teams that enforce strict PR size limits see measurably better review outcomes. But size limits do not eliminate the structural blind spots; they reduce the probability that any specific reviewer misses a specific item. A reviewer carefully reading a 100-line diff can still fail to notice that a removed line was the only error handler for that code path. Smaller diffs reduce probability. They do not change the mechanism.

Under 200 lines

Reviewers maintain reasonable attention. Structural issues still escape, but the probability is lower and reviewers have more bandwidth to notice what was deleted.

200 to 400 lines

Review quality begins to degrade measurably. Reviewers spend more time understanding the overall change and less time auditing individual lines. Deletions are increasingly overlooked.

Over 400 lines

Research documents significant decline in defect detection rate. Reviewers approve based on overall impression. The structural blind spots dominate over any individual finding.
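The three tiers above are simple enough to encode as a pre-merge check. The sketch below uses the 200 and 400 line thresholds from this section; the `ReviewRisk` enum and `PrSize` class are hypothetical names, and counting lines that begin with `+` or `-` is an assumed definition of diff size.

```csharp
using System;

public enum ReviewRisk { Low, Degrading, High }

public static class PrSize
{
    // Counts added and deleted lines in a unified diff, skipping file headers.
    public static int CountChangedLines(string unifiedDiff)
    {
        int count = 0;
        foreach (var rawLine in unifiedDiff.Split('\n'))
        {
            bool isHeader = rawLine.StartsWith("+++", StringComparison.Ordinal)
                            || rawLine.StartsWith("---", StringComparison.Ordinal);
            bool isChange = rawLine.StartsWith("+", StringComparison.Ordinal)
                            || rawLine.StartsWith("-", StringComparison.Ordinal);
            if (isChange && !isHeader)
                count++;
        }
        return count;
    }

    // Maps a changed-line count onto the tiers described in this section.
    public static ReviewRisk Classify(int changedLines) => changedLines switch
    {
        < 200 => ReviewRisk.Low,
        <= 400 => ReviewRisk.Degrading,
        _ => ReviewRisk.High,
    };
}
```

A team could use a classification like this to prompt for a split before review starts, keeping in mind that a `Low` result lowers the odds of a miss without removing the blind spots themselves.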

The cost case for catching issues earlier

Boehm and Basili's foundational work on defect cost across the software lifecycle established that defects found after deployment cost substantially more to fix than defects found before coding is complete, with multipliers of 10x to 100x depending on system type and defect category.[4] This ratio is widely cited, but the implication for code review is rarely stated explicitly: if review misses a defect that was present at commit time, the cost to fix it keeps growing until the defect is detected.

A defect caught by pre-commit analysis costs the developer seconds: read the finding, fix the line, re-commit. The same defect caught in code review costs the author a context switch, a new commit, a re-review cycle, and potentially a re-run of CI. The same defect found in production costs an incident response, a root cause analysis, a hotfix branch, an emergency deploy, and postmortem documentation: hours to days of engineering time for a finding that could have been fixed in under a minute at commit time.

This is not an argument that code review should be removed. It is an argument that code review should be the second line of defense, not the first and only line. Reviewers focus best when the structural work is already done.

- Pre-commit: seconds to fix
- Code review: minutes to hours
- Post-deploy: hours to days

Relative cost of remediating the same defect at different stages. Source: Boehm and Basili, 2001.[4]

Automation is not a replacement for review

Code review provides intent verification, domain knowledge transfer, mentorship, and team alignment that no tool can replicate. When a reviewer asks "why did you choose this approach instead of the existing pattern?" they are exercising judgment that has nothing to do with detecting removed null guards. These two functions should not compete; they should be layered.

What automated diff analysis can do is close the structural blind spots: the patterns that require exhaustive analysis of what was removed and changed, not human comprehension of what the code does. Running automated checks before the PR opens flags behavioral and structural risks that reviewers are likely to miss. The goal is not to remove review. The goal is to ensure that by the time a PR opens, structural risks are already resolved.

When reviewers do not have to scan for async anti-patterns, missing null guards, removed error handlers, or implicit contract changes, they can spend their full attention on what humans do best: verifying intent, catching design problems, and sharing context about the system.

Review does not become less valuable. It becomes better spent.

Other automated tools address overlapping but distinct surfaces. CodeQL and GitHub's code scanning target security vulnerabilities. Linters enforce style rules and common anti-patterns. Type checkers verify contract conformance within a single build boundary. These tools are valuable and should run alongside structural diff analysis. None are designed to detect removed guard clauses, changed exception types, or deleted null checks: the behavioral drift patterns that structural pre-commit analysis specifically targets.

Related reading

Tests pass but bugs still reach production. The categories of risk that escape test suites and why a green build is not the same as safe code.

How analyzing only the changed lines, rather than the whole codebase, produces faster, lower-noise findings that are directly actionable at commit time.

References

[1] Czerwonka, J., et al. "Code Reviews Do Not Find Bugs: How the Current Code Review Best Practice Slows Us Down." ICSE Companion 2015. Microsoft Research. https://www.microsoft.com/en-us/research/publication/code-reviews-do-not-find-bugs/

[2] Bacchelli, A. and Bird, C. "Expectations, Outcomes, and Challenges of Modern Code Review." ICSE 2013. ACM Digital Library. https://dl.acm.org/doi/10.5555/2486788.2486882

[3] McIntosh, S., et al. "The Impact of Code Review Coverage and Code Review Participation on Software Quality." MSR 2014. ACM Digital Library. https://dl.acm.org/doi/10.1145/2597073.2597076

[4] Boehm, B. and Basili, V. R. "Software Defect Reduction Top 10 List." IEEE Computer, 34(1), January 2001, pp. 135-137.

Eric Cogen -- Founder, GauntletCI

Twenty years in .NET production. Most of those years, the bugs that hurt me were not the ones tests caught. They were the assumptions I did not know I was making: a removed guard clause, a renamed method that still did the old thing, a catch {} that turned a page into a silent dashboard lie. GauntletCI is the checklist I wish I had run before every commit. It runs the rules I learned the hard way, so you do not have to.